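Update 2018-05-05: training with word2vec as input

Using Word2Vec

The code for training with word2vec was finished long ago, but I never got around to tidying it up and posting it. For now I am posting the modified code here; I will describe the changes when I have time.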

import tensorflow as tf

import numpy as np

class TextCNN(object):

def __init__(

self, sequence_length, num_classes, vocab_size,

embedding_size, filter_sizes, num_filters, l2_reg_lambda=0.0):

# Placeholders for input, output and dropout

self.input_x = tf.placeholder(tf.int32, [None, sequence_length], name="input_x")

self.input_y = tf.placeholder(tf.float32, [None, num_classes], name="input_y")

self.dropout_keep_prob = tf.placeholder(tf.float32, name="dropout_keep_prob")

self.learning_rate = tf.placeholder(tf.float32, name='learning_rate')

# Keeping track of l2 regularization loss (optional)

l2_loss = tf.constant(0.0)

# Embedding layer

with tf.device('/cpu:0'), tf.name_scope("embedding"):

self.W = tf.Variable(

tf.random_uniform([vocab_size, embedding_size], -1.0, 1.0),

name="W")

self.embedded_chars = tf.nn.embedding_lookup(self.W, self.input_x)

self.embedded_chars_expanded = tf.expand_dims(self.embedded_chars, -1)

# Create a convolution + maxpool layer for each filter size

pooled_outputs = []

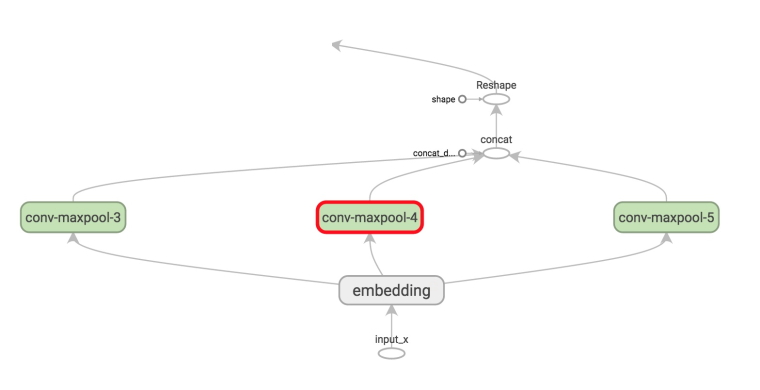

for i, filter_size in enumerate(filter_sizes):

with tf.name_scope("conv-maxpool-%s" % filter_size):

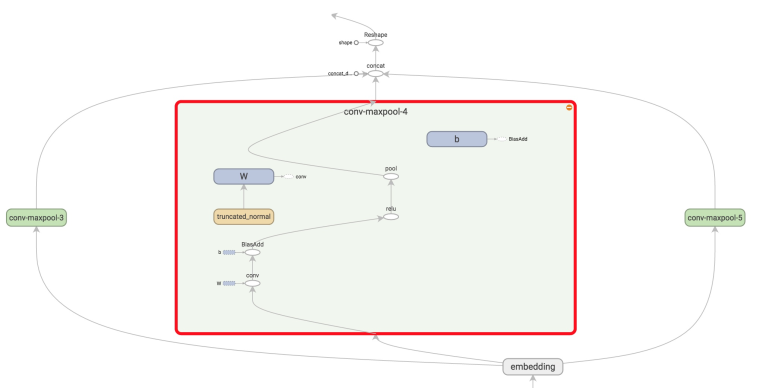

# Convolution Layer

filter_shape = [filter_size, embedding_size, 1, num_filters]

W = tf.Variable(tf.truncated_normal(filter_shape, stddev=0.1), name="W")

b = tf.Variable(tf.constant(0.1, shape=[num_filters]), name="b")

conv = tf.nn.conv2d(

self.embedded_chars_expanded,

W,

strides=[1, 1, 1, 1],

padding="VALID",

name="conv")

# Apply nonlinearity

h = tf.nn.relu(tf.nn.bias_add(conv, b), name="relu")

# Maxpooling over the outputs

pooled = tf.nn.max_pool(

h,

ksize=[1, sequence_length - filter_size + 1, 1, 1],

strides=[1, 1, 1, 1],

padding='VALID',

name="pool")

pooled_outputs.append(pooled)

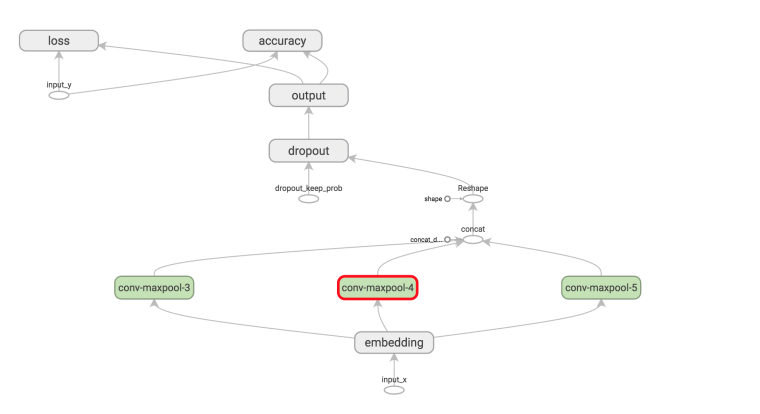

# Combine all the pooled features

num_filters_total = num_filters * len(filter_sizes)

self.h_pool = tf.concat(pooled_outputs, 3)

self.h_pool_flat = tf.reshape(self.h_pool, [-1, num_filters_total])

# Add dropout

with tf.name_scope("dropout"):

self.h_drop = tf.nn.dropout(self.h_pool_flat, self.dropout_keep_prob)

# Final (unnormalized) scores and predictions

with tf.name_scope("output"):

W = tf.get_variable(

"W",

shape=[num_filters_total, num_classes],

initializer=tf.contrib.layers.xavier_initializer())

b = tf.Variable(tf.constant(0.1, shape=[num_classes]), name="b")

l2_loss += tf.nn.l2_loss(W)

l2_loss += tf.nn.l2_loss(b)

self.scores = tf.nn.xw_plus_b(self.h_drop, W, b, name="scores")

self.predictions = tf.argmax(self.scores, 1, name="predictions")

# CalculateMean cross-entropy loss

with tf.name_scope("loss"):

losses = tf.nn.softmax_cross_entropy_with_logits(logits=self.scores, labels=self.input_y)

self.loss = tf.reduce_mean(losses) + l2_reg_lambda * l2_loss

# Accuracy

with tf.name_scope("accuracy"):

correct_predictions = tf.equal(self.predictions, tf.argmax(self.input_y, 1))

self.accuracy = tf.reduce_mean(tf.cast(correct_predictions, "float"), name="accuracy")
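To make the tensor shapes in the convolution and pooling layers of `TextCNN` easier to follow, here is a minimal NumPy sketch of the same idea for a single sentence. It is only an illustration, not the TensorFlow code above: the function names `conv_relu_maxpool` and `text_cnn_features` and the toy sizes are my own assumptions. It slides each filter over the word axis without padding (like `padding="VALID"`), applies ReLU, and keeps the maximum over all positions.

```python
import numpy as np


def conv_relu_maxpool(embedded, filters, bias):
    """Convolve one sentence with one filter size, then max-pool over time.

    embedded: [sequence_length, embedding_size]
    filters:  [filter_size, embedding_size, num_filters]
    bias:     [num_filters]
    returns:  [num_filters]
    """
    sequence_length = embedded.shape[0]
    filter_size, _, num_filters = filters.shape
    out_len = sequence_length - filter_size + 1  # no padding ("VALID")
    conv = np.empty((out_len, num_filters))
    for t in range(out_len):
        window = embedded[t:t + filter_size]  # [filter_size, embedding_size]
        # Sum over both the word axis and the embedding axis of the window
        conv[t] = np.tensordot(window, filters, axes=([0, 1], [0, 1]))
    h = np.maximum(conv + bias, 0.0)  # ReLU
    return h.max(axis=0)              # max over all window positions


def text_cnn_features(embedded, filter_bank):
    """Concatenate the pooled features of every filter size."""
    return np.concatenate([conv_relu_maxpool(embedded, W, b) for W, b in filter_bank])


# Toy sizes (hypothetical): 10 words, 4-dim embeddings, 2 filters per size
rng = np.random.RandomState(0)
sentence = rng.uniform(-1.0, 1.0, (10, 4))
bank = [(rng.normal(0.0, 0.1, (size, 4, 2)), np.full(2, 0.1)) for size in (3, 4, 5)]
features = text_cnn_features(sentence, bank)
print(features.shape)  # (6,) = num_filters * len(filter_sizes)
```

The length of the resulting vector corresponds to `num_filters_total` in the class above, which is why `h_pool` is reshaped to `[-1, num_filters_total]` before dropout.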

# data_helpers.py
import numpy as np
import re
import jieba
import itertools
from collections import Counter
from tensorflow.contrib import learn
from gensim.models import Word2Vec


def clean_str(string):
    """
    Tokenization/string cleaning for all datasets except for SST.
    Original taken from https://github.com/yoonkim/CNN_sentence/blob/master/process_data.py
    """
    # This helper targets English text; it is not called elsewhere in this listing.
    string = re.sub(r"[^A-Za-z0-9(),!?\'\`]", " ", string)
    string = re.sub(r"\'s", " \'s", string)
    string = re.sub(r"\'ve", " \'ve", string)
    string = re.sub(r"n\'t", " n\'t", string)
    string = re.sub(r"\'re", " \'re", string)
    string = re.sub(r"\'d", " \'d", string)
    string = re.sub(r"\'ll", " \'ll", string)
    string = re.sub(r",", " , ", string)
    string = re.sub(r"!", " ! ", string)
    # Raw strings avoid Python's invalid-escape warnings for "\(", "\)" and "\?"
    string = re.sub(r"\(", r" \( ", string)
    string = re.sub(r"\)", r" \) ", string)
    string = re.sub(r"\?", r" \? ", string)
    string = re.sub(r"\s{2,}", " ", string)
    return string.strip().lower()

def load_data_and_labels(train_file_path, test_file_path):
    """
    Load the training and test data.
    Each line of the input files has the form "sentence||label".
    :param train_file_path: path to the training file
    :param test_file_path: path to the test file
    :return: segmented training sentences, one-hot training labels,
             segmented test sentences, one-hot test labels, list of label names
    """
    # Load data from files
    x_train = list()  # training sentences
    y_train_data = list()  # training labels
    x_test = list()  # test sentences
    y_test_data = list()  # test labels
    y_labels = list()  # set of distinct labels

    # Read the training data
    with open(train_file_path, 'r', encoding='utf-8') as train_file:
        for line in train_file.read().split('\n'):
            sp = line.split('||')
            if len(sp) != 2:
                continue
            # Segment the sentence with jieba and join the tokens with spaces
            x_train.append(' '.join(jieba.cut(sp[0])))
            y_train_data.append(sp[1])

    # Read the test data
    with open(test_file_path, 'r', encoding='utf-8') as test_file:
        for line in test_file.read().split('\n'):
            sp = line.split('||')
            if len(sp) != 2:
                continue
            x_test.append(' '.join(jieba.cut(sp[0])))
            y_test_data.append(sp[1])

    # Build the label list (from the training labels only)
    for item in y_train_data:
        if item not in y_labels:
            y_labels.append(item)
    labels_len = len(y_labels)
    print('Number of classes: ', labels_len)

    # Build the one-hot training labels (np.int was removed from NumPy; use int)
    y_train = np.zeros((len(y_train_data), labels_len), dtype=int)
    for index in range(len(y_train_data)):
        y_train[index][y_labels.index(y_train_data[index])] = 1

    # Build the one-hot test labels
    y_test = np.zeros((len(y_test_data), labels_len), dtype=int)
    for index in range(len(y_test_data)):
        y_test[index][y_labels.index(y_test_data[index])] = 1

    return [x_train, y_train, x_test, y_test, y_labels]

def load_train_dev_data(train_file_path, test_file_path):
    x_train_text, y_train, x_test_text, y_test, _ = load_data_and_labels(train_file_path, test_file_path)

    # Load data
    print("Loading data...")

    # Build vocabulary
    max_train_document_length = max([len(x.split(" ")) for x in x_train_text])
    max_test_document_length = max([len(x.split(" ")) for x in x_test_text])
    max_document_length = max(max_train_document_length, max_test_document_length)

    # Map the input text to word ids with VocabularyProcessor
    vocab_processor = learn.preprocessing.VocabularyProcessor(max_document_length)
    x_train = np.array(list(vocab_processor.fit_transform(x_train_text)))
    # The vocabulary is fitted on the training text only; the test text is just transformed
    x_test = np.array(list(vocab_processor.transform(x_test_text)))

    # Randomly shuffle data
    np.random.seed(10)
    shuffle_indices = np.random.permutation(np.arange(len(y_train)))
    x_train = x_train[shuffle_indices]
    y_train = y_train[shuffle_indices]

    print("Vocabulary Size: {:d}".format(len(vocab_processor.vocabulary_)))
    print("Train/Dev split: {:d}/{:d}".format(len(y_train), len(y_test)))
    return x_train, y_train, x_test, y_test, vocab_processor

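def load_embedding_vectors_word2vec(vocabulary, filename, binary):
    # `binary` is kept for interface compatibility; Word2Vec.load reads a model
    # saved by gensim itself, so the flag is not used here.
    word2vec_model = Word2Vec.load(filename)
    # Size the matrix from the model instead of hard-coding 200
    embedding_size = word2vec_model.vector_size
    # Words that are missing from the word2vec model keep a small random vector
    embedding_vectors = np.random.uniform(-0.25, 0.25, (len(vocabulary), embedding_size))
    # Note: gensim 4.x renamed `wv.vocab` to `wv.key_to_index`
    for word in word2vec_model.wv.vocab:
        idx = vocabulary.get(word)
        # Index 0 is the VocabularyProcessor's slot for unknown words
        if idx != 0:
            embedding_vectors[idx] = word2vec_model.wv[word]
    return embedding_vectors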

def batch_iter(data, batch_size, num_epochs, shuffle=True):
    """
    Generates a batch iterator for a dataset.
    """
    data = np.array(data)
    data_size = len(data)
    num_batches_per_epoch = int((len(data)-1)/batch_size) + 1
    for epoch in range(num_epochs):
        # Shuffle the data at each epoch
        if shuffle:
            shuffle_indices = np.random.permutation(np.arange(data_size))
            shuffled_data = data[shuffle_indices]
        else:
            shuffled_data = data
        for batch_num in range(num_batches_per_epoch):
            start_index = batch_num * batch_size
            end_index = min((batch_num + 1) * batch_size, data_size)
            yield shuffled_data[start_index:end_index]
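The core of `load_embedding_vectors_word2vec` is a simple table fill: start from small random vectors and copy in the pre-trained vector for every vocabulary word the word2vec model knows. The sketch below shows that step with plain dictionaries so it runs without gensim or TensorFlow. The names `build_embedding_matrix`, `word_to_index` and `pretrained`, as well as the toy vectors, are assumptions made for this illustration.

```python
import numpy as np


def build_embedding_matrix(word_to_index, pretrained, dim, seed=0):
    """Return (matrix, hits): one row per vocabulary word.

    word_to_index: dict mapping word -> row index (index 0 reserved for unknown words)
    pretrained:    dict mapping word -> vector of length `dim` (stand-in for a word2vec model)
    """
    rng = np.random.RandomState(seed)
    # Rows for words without a pre-trained vector stay randomly initialized
    matrix = rng.uniform(-0.25, 0.25, (len(word_to_index), dim))
    hits = 0
    for word, idx in word_to_index.items():
        vector = pretrained.get(word)
        if idx != 0 and vector is not None:
            matrix[idx] = vector
            hits += 1
    return matrix, hits


# Toy data (hypothetical): a three-word vocabulary and two pre-trained vectors
vocab = {"<UNK>": 0, "猫": 1, "狗": 2}
vectors = {"猫": np.ones(3), "鸟": np.zeros(3)}
init_w, found = build_embedding_matrix(vocab, vectors, dim=3)
print(init_w.shape, found)
```

A matrix built this way has the same shape as the embedding variable `W`, which is what allows it to be assigned to `W` before training starts.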

#! /usr/bin/env python
import tensorflow as tf
import numpy as np
import os
import time
import datetime
import data_helpers
from text_cnn import TextCNN
from tensorflow.contrib import learn
import yaml

# Parameters
# ==================================================

# Data loading params
tf.app.flags.DEFINE_float("dev_sample_percentage", .1, "Percentage of the training data to use for validation")
tf.app.flags.DEFINE_string("train_file", "../data/train_data.txt", "Train file source.")
tf.app.flags.DEFINE_string("test_file", "../data/test_data.txt", "Test file source.")

# Model Hyperparameters
tf.app.flags.DEFINE_integer("embedding_dim", 128, "Dimensionality of character embedding (default: 128)")
tf.app.flags.DEFINE_string("filter_sizes", "3,4,5", "Comma-separated filter sizes (default: '3,4,5')")
tf.app.flags.DEFINE_integer("num_filters", 128, "Number of filters per filter size (default: 128)")
tf.app.flags.DEFINE_float("dropout_keep_prob", 0.5, "Dropout keep probability (default: 0.5)")
tf.app.flags.DEFINE_float("l2_reg_lambda", 0.0, "L2 regularization lambda (default: 0.0)")

# Training parameters
tf.app.flags.DEFINE_integer("batch_size", 128, "Batch Size (default: 128)")
tf.app.flags.DEFINE_integer("num_epochs", 100, "Number of training epochs (default: 100)")
tf.app.flags.DEFINE_integer("evaluate_every", 100, "Evaluate model on dev set after this many steps (default: 100)")
tf.app.flags.DEFINE_integer("checkpoint_every", 1000, "Save model after this many steps (default: 1000)")
tf.app.flags.DEFINE_integer("num_checkpoints", 5, "Number of checkpoints to store (default: 5)")

# Misc Parameters
tf.app.flags.DEFINE_boolean("allow_soft_placement", True, "Allow device soft device placement")
tf.app.flags.DEFINE_boolean("log_device_placement", False, "Log placement of ops on devices")

FLAGS = tf.app.flags.FLAGS
print("\nParameters:")
for attr, value in sorted(FLAGS.__flags.items()):
    print("{}={}".format(attr.upper(), value))
print("")

# Data Preparation
# ==================================================

# Load the embedding settings; safe_load avoids executing arbitrary YAML tags
with open("config.yml", 'r') as ymlfile:
    cfg = yaml.safe_load(ymlfile)

# Load data
print("Loading data...")
x_train, y_train, x_test, y_test, vocab_processor = data_helpers.load_train_dev_data(FLAGS.train_file, FLAGS.test_file)

# The embedding size comes from config.yml, not from the embedding_dim flag
embedding_name = cfg['word_embeddings']['default']
embedding_dimension = cfg['word_embeddings'][embedding_name]['dimension']

# Training
# ==================================================

with tf.Graph().as_default():
    session_conf = tf.ConfigProto(
        allow_soft_placement=FLAGS.allow_soft_placement,
        log_device_placement=FLAGS.log_device_placement)
    sess = tf.Session(config=session_conf)
    with sess.as_default():
        cnn = TextCNN(
            sequence_length=x_train.shape[1],
            num_classes=y_train.shape[1],
            vocab_size=len(vocab_processor.vocabulary_),
            embedding_size=embedding_dimension,
            filter_sizes=list(map(int, FLAGS.filter_sizes.split(","))),
            num_filters=FLAGS.num_filters,
            l2_reg_lambda=FLAGS.l2_reg_lambda)
        # Replace the learning_rate placeholder with a constant so it never has to be fed
        cnn.learning_rate = 0.01

        # Define Training procedure
        global_step = tf.Variable(0, name="global_step", trainable=False)
        optimizer = tf.train.AdamOptimizer(cnn.learning_rate)
        grads_and_vars = optimizer.compute_gradients(cnn.loss)
        train_op = optimizer.apply_gradients(grads_and_vars, global_step=global_step)

        # Keep track of gradient values and sparsity (optional)
        grad_summaries = []
        for g, v in grads_and_vars:
            if g is not None:
                grad_hist_summary = tf.summary.histogram("{}/grad/hist".format(v.name), g)
                sparsity_summary = tf.summary.scalar("{}/grad/sparsity".format(v.name), tf.nn.zero_fraction(g))
                grad_summaries.append(grad_hist_summary)
                grad_summaries.append(sparsity_summary)
        grad_summaries_merged = tf.summary.merge(grad_summaries)

        # Output directory for models and summaries
        timestamp = str(int(time.time()))
        out_dir = os.path.abspath(os.path.join(os.path.curdir, "runs", timestamp))
        print("Writing to {}\n".format(out_dir))

        # Summaries for loss and accuracy
        loss_summary = tf.summary.scalar("loss", cnn.loss)
        acc_summary = tf.summary.scalar("accuracy", cnn.accuracy)

        # Train Summaries
        train_summary_op = tf.summary.merge([loss_summary, acc_summary, grad_summaries_merged])
        train_summary_dir = os.path.join(out_dir, "summaries", "train")
        train_summary_writer = tf.summary.FileWriter(train_summary_dir, sess.graph)

        # Dev summaries
        dev_summary_op = tf.summary.merge([loss_summary, acc_summary])
        dev_summary_dir = os.path.join(out_dir, "summaries", "dev")
        dev_summary_writer = tf.summary.FileWriter(dev_summary_dir, sess.graph)

        # Checkpoint directory. Tensorflow assumes this directory already exists so we need to create it
        checkpoint_dir = os.path.abspath(os.path.join(out_dir, "checkpoints"))
        checkpoint_prefix = os.path.join(checkpoint_dir, "model")
        if not os.path.exists(checkpoint_dir):
            os.makedirs(checkpoint_dir)
        saver = tf.train.Saver(tf.global_variables(), max_to_keep=FLAGS.num_checkpoints)

        # Write vocabulary
        vocab_processor.save(os.path.join(out_dir, "vocab"))

        # Initialize all variables
        sess.run(tf.global_variables_initializer())

        # Overwrite the randomly initialized embedding matrix with the word2vec vectors
        vocabulary = vocab_processor.vocabulary_
        initW = data_helpers.load_embedding_vectors_word2vec(vocabulary,
                                                             cfg['word_embeddings']['word2vec']['path'],
                                                             cfg['word_embeddings']['word2vec']['binary'])
        print(initW.shape)
        sess.run(cnn.W.assign(initW))

        def train_step(x_batch, y_batch):
            """
            A single training step
            """
            feed_dict = {
                cnn.input_x: x_batch,
                cnn.input_y: y_batch,
                cnn.dropout_keep_prob: FLAGS.dropout_keep_prob
            }
            _, step, summaries, loss, accuracy = sess.run(
                [train_op, global_step, train_summary_op, cnn.loss, cnn.accuracy],
                feed_dict)
            time_str = datetime.datetime.now().isoformat()
            print("{}: step {}, loss {:g}, acc {:g}".format(time_str, step, loss, accuracy))
            train_summary_writer.add_summary(summaries, step)

        def dev_step(x_batch, y_batch, writer=None):
            """
            Evaluates model on a dev set
            """
            feed_dict = {
                cnn.input_x: x_batch,
                cnn.input_y: y_batch,
                cnn.dropout_keep_prob: 1.0
            }
            step, summaries, loss, accuracy = sess.run(
                [global_step, dev_summary_op, cnn.loss, cnn.accuracy],
                feed_dict)
            time_str = datetime.datetime.now().isoformat()
            print("{}: step {}, loss {:g}, acc {:g}".format(time_str, step, loss, accuracy))
            if writer:
                writer.add_summary(summaries, step)
            # Print the epoch number when an evaluation falls on an epoch boundary.
            # (The original printed step % batch_size, which is always 0 at this point.)
            batches_per_epoch = (len(y_train) - 1) // FLAGS.batch_size + 1
            if step % batches_per_epoch == 0:
                print('epoch ', step // batches_per_epoch)

        # Generate batches
        batches = data_helpers.batch_iter(
            list(zip(x_train, y_train)), FLAGS.batch_size, FLAGS.num_epochs)

        # Training loop. For each batch...
        for batch in batches:
            x_batch, y_batch = zip(*batch)
            train_step(x_batch, y_batch)
            current_step = tf.train.global_step(sess, global_step)
            if current_step % FLAGS.evaluate_every == 0:
                print("\nEvaluation:")
                dev_step(x_test, y_test, writer=dev_summary_writer)
                print("")
            if current_step % FLAGS.checkpoint_every == 0:
                path = saver.save(sess, checkpoint_prefix, global_step=current_step)
                print("Saved model checkpoint to {}\n".format(path))
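The training script reads `config.yml`, which is not included in this post. Judging only from the keys the script accesses (`word_embeddings` → `default`, and per embedding `path`, `dimension` and `binary`), the file probably looks roughly like the dictionary below, shown here in the form a YAML loader would return. The path is a placeholder and the helper `resolve_embedding` is a hypothetical name; the dimension of 200 is taken from the value originally hard-coded in `load_embedding_vectors_word2vec`.

```python
# Assumed layout of config.yml, written as the dict a YAML loader would return.
EXAMPLE_CFG = {
    "word_embeddings": {
        "default": "word2vec",  # name of the embedding entry to use
        "word2vec": {
            "path": "../data/word2vec.model",  # placeholder path, adjust to your own model
            "dimension": 200,                  # must match the vector size of the model
            "binary": False,
        },
    }
}


def resolve_embedding(cfg):
    """Return (name, dimension, path, binary) for the default embedding entry."""
    section = cfg["word_embeddings"]
    name = section["default"]
    entry = section[name]
    return name, entry["dimension"], entry["path"], entry["binary"]


name, dimension, path, binary = resolve_embedding(EXAMPLE_CFG)
print(name, dimension, path, binary)
```

Note that the script itself looks up `path` and `binary` under the fixed key `word2vec` rather than under the default name, so the two only agree when `default` is set to `word2vec`.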
